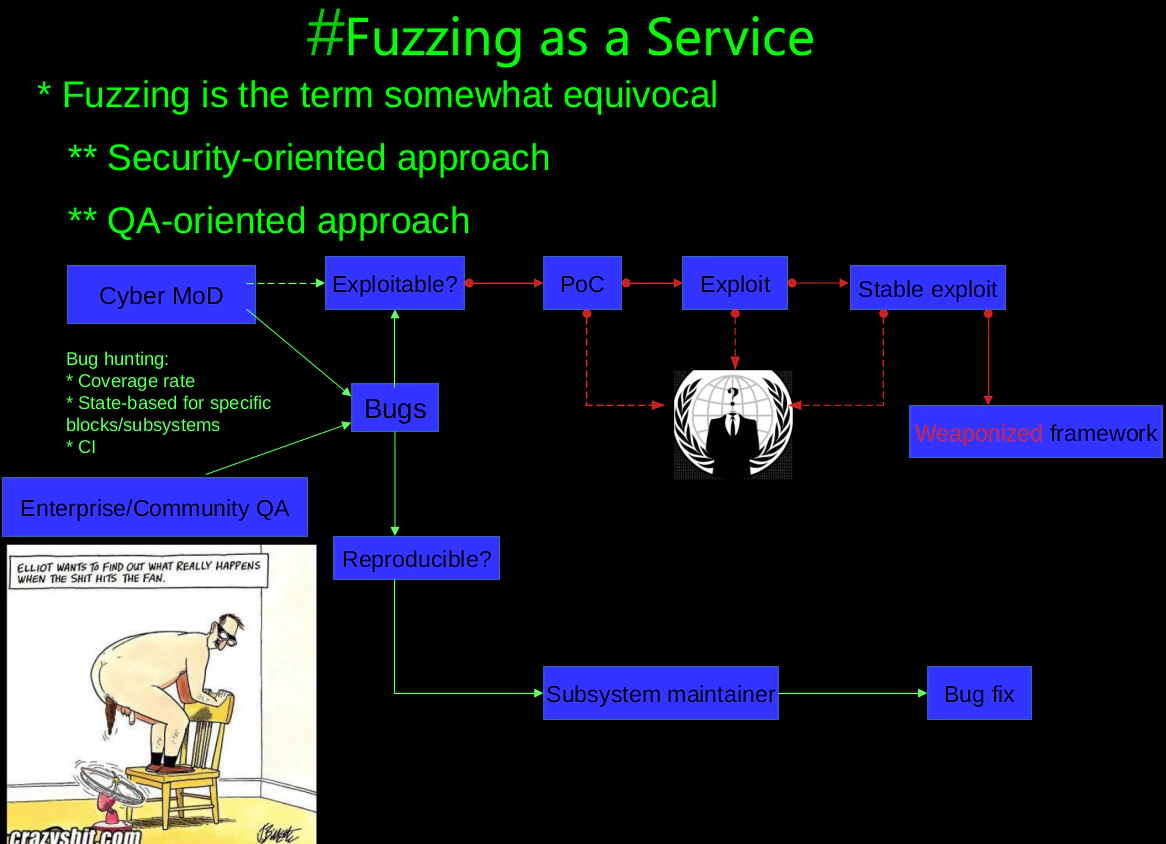

Technical analysis of syzkaller-based fuzzers: It's not about VaultFuzzer!

0. VaultFuzzer

S0rry, VaultFuzzer is not the main player today. We’re going to take a little ride with Harbian-QA and GREBE instead.

1. Harbian-QA

Code coverage is one of the core features of an operating system kernel fuzzer. The fuzzer can assign priorities according to the code covered by testcases and steer itself toward the code in the execution flow related to those code blocks. Coverage is not flat, however: because of the kernel's complex context, coverage-guided fuzzing faces various obstacles. For example, a conditional branch in function A() may depend on a value assigned in another function B(), even though there is no call relationship between A() and B(). The relationship only forms when the two are triggered by different system calls. __sanitizer_cov_trace_cmp() collects the operands compared at the conditional branches the program reaches, but in the kernel the data for a conditional branch often does not come directly from the system call's input parameters, so this problem can’t be solved easily (judging by the publicly known fuzzing methods, this dynamic data does not seem to be utilized effectively).
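To make the limitation concrete, here is a minimal sketch of how recorded comparison operands can be turned into mutation hints for a syscall argument. It is a simplified illustration in Python, not syzkaller's actual code; the function `comparison_hints`, the operand-pair format and all sample values are assumptions made up for this example.

```python
def comparison_hints(arg_value, comparisons):
    """Return candidate replacement values for one syscall argument.

    comparisons: iterable of (op1, op2) operand pairs, in the form a hook
    such as __sanitizer_cov_trace_cmp() could record them (assumed format).
    """
    hints = set()
    for op1, op2 in comparisons:
        # A comparison only helps if the argument itself is one operand;
        # the other operand is then a value worth trying.
        if op1 == arg_value and op2 != arg_value:
            hints.add(op2)
        elif op2 == arg_value and op1 != arg_value:
            hints.add(op1)
    return hints


# The branch compares the argument (0x10) directly against 0x1234,
# so the fuzzer learns which value to try next.
direct = comparison_hints(0x10, [(0x10, 0x1234)])

# The branch compares a kernel field (7, set by an earlier syscall)
# against a constant (1). The argument never appears, so nothing is learned.
indirect = comparison_hints(0x10, [(7, 1)])
```

In the second case the operands are still collected, but they cannot be mapped back to anything the fuzzer controls directly, which is the situation described above.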

In late 2017, an anonymous hacker reached out to HardenedLinux and suggested that it was worth trying to correlate the corpus with specific code paths. The internal discussion lasted several weeks. The HardenedLinux maintainers agreed that syzkaller, as a generic framework, still had a lot of room for improvement before it would suit large-scale QA engineering and testing. Assuming that GCP engineers would face the same task when fuzzing a cloud production environment, a more comprehensive QA engineering effort seemed feasible. In 2018, a HardenedLinux maintainer implemented a feature that uses eBPF to collect structs and their values at the entry and exit points of selected functions, and shared it with the syzkaller community in January 2019. This data was called kernel state, although it had not yet had any impact on the execution flow (because it was collected at the entry point). Once the PoC (proof of concept) was finished and shared with the syzkaller community, it turned out to be unsuitable for a QA engineer’s daily work, because it required extra work that a QA engineer could not reasonably do. A general version of the implementation was finally completed in 2020 and the project was officially named Harbian-QA, which is also the predecessor of VaultFuzzer. The 2020 version of Harbian-QA includes:

- Implement coverage-filter in syzkaller and implement a dynamic, priority-guided coverage system.

- Implement instrumentation for the overwriting of kernel structs via GetElementPtr, using Clang/LLVM. The instrumented elements, namely the code location, the checksum of the struct and field name, and the member assignments, can be abstracted as kernel state.

- Tools that implement static analysis using Clang/LLVM to generate state tables of struct usage and CFG-based code block priority tables.

- Implement an interface in syzkaller for reading the state table and the code block priority table, so that syzkaller becomes a code-coverage + state guided fuzzer.

- With the help of syzkaller’s author and maintainer, Dmitry Vyukov, a more general version of cover-filter was merged into the syzkaller mainline code, while the other features were not merged for several reasons, e.g. the difficulty of maintaining them and the consistency of the syzkaller framework.

2. Syzkaller

In 2021 there was a discussion titled “Learning-based Controlled Concurrency Testing” that touched on several topics, state-based fuzzing among them. Dmitry CC'd Harbian-QA’s maintainer, and it’s worth noting that in that discussion Vegard Nossum proposed a way to collect the data being accessed. Vegard’s approach is to hash structs and their members, and he released a PoC prototype implemented as a GCC plugin in less than 24 hours. This approach is similar to the instrumentation-based Harbian-QA released in 2020, but it only collects a hash of the accessed struct and its member name. Vegard Nossum seems to be targeting only how to trigger concurrency errors effectively, which differs from Harbian-QA’s design goal.

3. GREBE: kernel-object-driven fuzzing - A new fuzzer with explanatory technical terms as its core feature?

In a recent paper, “GREBE: Unveiling Exploitation Potential for Linux Kernel Bugs,” the GREBE authors claim to have developed a “[kernel-object-driven](https://zplin.me/papers/GREBE_slides.pdf)” approach to fuzzing:

“we implement this approach as a kernel-object-driven fuzzing tool and name it after GREBE.”

Readers can refer to the paper for details; its approach is briefly described here:

- Use the GCC plugin to collect struct access as feedback.

- Static analysis of the structure and uses of the structure using Clang/LLVM.

- Use Syzkaller to explore the potential multiple behavior of the same bug based on code coverage and struct access.

This “approach” basically reuses all of the public research mentioned above.

| Name | Harbian-QA | Vegard’s PoC | GREBE |
|---|---|---|---|
| Instrumentation | Clang/LLVM: struct-field name checksum, value and address | GCC plugin: hash of struct field | GCC plugin: struct field information |
| Static analysis | Clang/LLVM: analysis of the usage frequency of struct fields | None | Clang/LLVM: analysis of dereference status for structs |
| Design goal | Guide syzkaller to hit struct members more frequently in order to trigger potential bugs | Trigger concurrency bugs | Explore crash cases of the same object that occur in different locations |

- If you agree that “the hash or checksum of the struct-member” is information about an object, then you should agree that Harbian-QA’s and Vegard’s approaches are object-driven (although Vegard only completed the instrumentation).

- If you agree that “exploring the crash/corruption cases of the same code in different places of the same object” has the potential to “trigger concurrency errors” such as UAF, then you probably agree that GREBE’s goal is basically the same as Vegard’s. That is to say, what GREBE provides is not a new type of fuzzer but an experiment that explores a special use-case by “borrowing” kernel fuzzing approaches that have been publicly available for years.

Both Harbian-QA and Vegard’s approach showed that a kernel fuzzer might need data other than code coverage as feedback for further improvement. A purely data-driven fuzzer is almost impossible, however, because instrumentation can’t detect every data operation whose behavior would benefit the fuzzer, and exploring code paths with data alone may be more difficult than with code coverage. The current (and usable) fuzzers are all code-coverage guided, while the data feedback mechanism can be used as a complement.

3.1 A bug’s multiple error behaviors

We haven’t done much research on corruption cases that hit the same object in different places, but we have had some similar hands-on experience. Alexander Popov published a PoC/exploit for CVE-2021-26708 and mentioned that the standard version of syzkaller could not reproduce the vulnerability. We tried the same vulnerability a few months ago: it was reproduced quickly, and we found a total of 3 corruptions within 15 minutes. It’s not clear whether syzkaller has improved its system call descriptions in the meantime, but it should be noted that the syzkaller used in our reproduction steps incorporated Harbian-QA’s coverage-filter. You can simply specify a path to target in the configuration, such as “net/vmw_vsock/”.

Simply put, with coverage filtering, syzkaller’s computing resources are concentrated on one subsystem, and the generated testcases all explore the possible behavior of that subsystem, including the so-called “A bug’s multiple error behaviors” that exist in it. This effect may be a side effect of Harbian-QA rather than a designed feature. For example, when a subsystem contains a lot of bloated code and the user is not willing to shrink the fuzzing target range, the effect is reduced and fuzzing takes longer.
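The idea of concentrating feedback on one subsystem can be sketched as follows. This is a minimal illustration in Python and is neither syzkaller's configuration format nor its implementation; `filter_coverage`, the PC values and the PC-to-file table are hypothetical, and in practice such a mapping would be derived from the kernel's debug information.

```python
def filter_coverage(covered, pc_to_file, prefixes):
    """Keep only covered PCs whose source file lies under a target prefix."""
    return {
        pc for pc in covered
        if any(pc_to_file.get(pc, "").startswith(p) for p in prefixes)
    }


# Hypothetical PC -> source file table, for illustration only.
PC_TO_FILE = {
    0x1000: "net/vmw_vsock/af_vsock.c",
    0x1010: "net/vmw_vsock/virtio_transport.c",
    0x2000: "fs/ext4/inode.c",
    0x3000: "mm/slub.c",
}

# One testcase touched vsock, ext4 and slub code.
covered = {0x1000, 0x2000, 0x3000}

# Only coverage inside the target path counts as feedback.
signal = filter_coverage(covered, PC_TO_FILE, ["net/vmw_vsock/"])
```

Testcases that only add coverage outside the target path yield an empty signal and are therefore not kept, which is how the fuzzer's effort stays on the chosen subsystem. The wider the prefix, the weaker this concentration becomes.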

GREBE did not abandon code coverage, and it’s foreseeable that relying on objects alone would cause great difficulties for a fuzzer. The type and number of object-related coverage entries in each individual code block do not change with the execution flow. In other words, this is just an object-related pseudo-coverage that raises the priority of code blocks related to the execution flow in syzkaller’s fuzzing process, so as to explore more blocks that touch this type of object.

GREBE’s design is not entirely sound; for example, it uses object information without assignments as feedback. You can instead assign priorities to code blocks based on static analysis by using Harbian-QA’s weighted-PC function, which is not merged into syzkaller’s mainline. It provides users with an interface for dynamic code block weights that guide syzkaller to increase code coverage and stress-test the target area more effectively (the point here is to guide the fuzzer to generate different testcases that pass through an already covered block), including giving more weight to code blocks with object operations. What is needed to explore the behavior of an object is a static analysis tool that determines how many target object operations a piece of code contains. Any type of instrumentation introduced into the tooling involves code changes, maintenance and performance hits for both the compiler and the kernel. Unfortunately, the static analysis tools can’t be avoided. In our experience, with Clang/LLVM it is difficult to map a block at the IR layer to a block at its actual address in the binary after linking, while with GCC this is comparatively easier to implement.

3.2 Feedback with data flow trace

The above form of object feedback is just a pseudo-coverage that adjusts the weights of code blocks, and it differs fundamentally from state feedback. Dynamic data feedback should have these properties:

- Even if the execution flow is the same, the assignment of the target variable may be different.

- The target variable has an effect on the subsequent execution flow.

__sanitizer_cov_trace_cmp is a real-life example: it can be used to determine how far a conditional branch is from being satisfied (the execution flow does not change until it is satisfied). However, especially in the kernel, whether a conditional branch is satisfied is often not directly determined by the system call’s input but by the state left behind by previous system calls. Because data that is referenced repeatedly across the code is usually implemented as structs in the kernel, Harbian-QA developed a feedback mechanism based on assignments to struct fields. Harbian-QA can use this mechanism to handle, for example, “sk->sk_state == TCP_CLOSE”: such code is passed to the fuzzer as a state. It does not affect the subsequent execution flow of the close() system call itself, but it does affect the kernel execution flow triggered by subsequent system calls. Testcases generated by the fuzzer thus reach various states of their own, and the fuzzer can add or correlate more system calls based on these states.
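The difference between access-only feedback and assignment-based state feedback can be illustrated with a small Python sketch. It is not Harbian-QA's implementation: the event format `(struct, field, value)`, the helper names and the use of CRC32 as the checksum are assumptions for this example, and the two TCP state constants are included only to make the sample data readable.

```python
import zlib


def field_id(struct_name, field_name):
    """Checksum of 'struct.field', standing in for an instrumented ID."""
    return zlib.crc32(f"{struct_name}.{field_name}".encode())


def access_signal(events):
    """Access-only feedback: which fields were touched, values ignored."""
    return {field_id(s, f) for s, f, _ in events}


def state_signal(events):
    """State feedback: which (field, assigned value) pairs were observed."""
    return {(field_id(s, f), v) for s, f, v in events}


TCP_ESTABLISHED, TCP_CLOSE = 1, 7

# Two runs follow the same code path but leave sk_state with different values.
run_1 = [("sock", "sk_state", TCP_ESTABLISHED)]
run_2 = [("sock", "sk_state", TCP_CLOSE)]

seen_states = state_signal(run_1)
new_states = state_signal(run_2) - seen_states
same_access = access_signal(run_1) == access_signal(run_2)
```

With access-only feedback the two runs are indistinguishable (`same_access` is true), whereas the state signal reports one new entry for the second run. That new entry is what lets the fuzzer keep the testcase and append further system calls that depend on the closed socket.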

In practice, Harbian-QA tried an approach that encourages the kernel fuzzer to overwrite objects in order to build a more complex context for testcases. With this assignment-based feedback, a struct field only becomes eligible as a new state when it is given a new value, so instrumentation is needed to obtain the relevant value dynamically. On the other hand, static analysis would be the better option if it could obtain the same information as instrumentation, because the changes required all around are much smaller. Harbian-QA ignored the action of accessing structs back then, whereas both Vegard’s PoC and GREBE address this point.

Across our various successes and failures, feedback from data flow tracing appears to have more use-cases and may become a very important part of the fuzzing process.

4. Conclusion

Both HardenedLinux’s Harbian-QA and HardenedVault’s VaultFuzzer are designed to solve real-life problems in the data center. Security research (or research in other fields of computer science) makes little sense if it can’t benefit production and users. QA engineering in particular must take various issues into account.

On the other hand, Vault Labs contacted HardenedLinux’s former full-time maintainer to confirm that Dongliang Mu, one of the authors of the GREBE paper, has been following the progress of Harbian-QA for a long time and sought help from HardenedLinux regarding Harbian-QA. Dongliang Mu is also active in the syzkaller community and has likely read Vegard’s PoC as well. Unfortunately, after replacing the terminology and explanations, GREBE claims that its design is a whole new type of kernel fuzzer created by the GREBE team. The GREBE paper spends a lot of space describing why other fuzzers can’t meet its use-case, yet the paper cites neither Harbian-QA nor Vegard’s PoC. Well, we are not quite sure how modern academia works, but common sense tells us that this “copy + paste + replace” without any citation/reference/credit would have been intolerable even in Plato’s time. RR firmly believes that HardenedVault should remain “We’re neither academia bitch nor industry leech.” It wasn’t the red pill choice, was it?